Meeting Notes July 2023

Meeting 06/07

Research

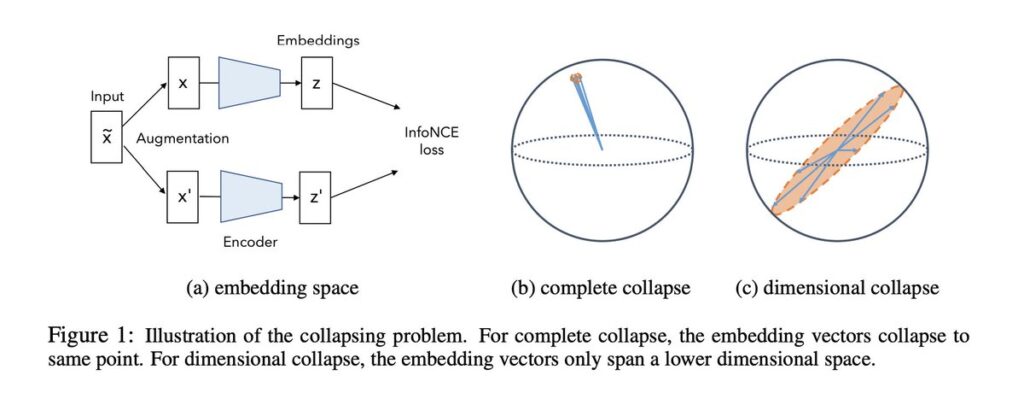

- Investigating Representation Collapse in Reinforcement Learning Agents from Vision

- plan/structure?

- what RL algorithms?

- visual data

- Gerhard Neumann, Marc Toussaint, Justus Piater (Innsbruck)

- Define a research question

- Focus on some domain

- Unnormalized Contrastive learning

- All CL models use l2 normalization of the representation

- Stability: Normalizing the representations ensures that they all have the same magnitude. This can make the learning process more stable, as it prevents the model from assigning arbitrarily large or small magnitudes to the representations.

- Focus on direction: By constraining the representations to have a fixed magnitude, the learning process focuses on the direction of the vectors in the embedding space. This is often what we care about in tasks like contrastive learning, where the goal is to make the representations of similar inputs point in similar directions.

- Computational convenience: Many computations are easier to perform and interpret in normalized spaces; for example, the dot product between two unit vectors equals their cosine similarity.

- Interpretability: Normalized representations are often more interpretable, as the angle between two vectors can be directly interpreted as a measure of similarity or dissimilarity.

- BUT this comes at the expense of:

- Decreased Capacity: With normalization, the model’s capacity to represent data is reduced since it can only rely on the direction of vectors in the embedding space. This limitation may result in the model being less able to capture complex patterns in the data.

- Missing Magnitude Information: Normalized vectors cannot use their magnitude to convey meaningful data properties such as confidence levels or other relevant characteristics. Normalization discards this information, which limits what the representation can express about the data.

- IDEA: remove the l2 normalization, and instead:

- Regularize the model to penalize large magnitudes.

- Scale the representations to a desired range.

- Design a custom loss function considering both direction and magnitude

- Breaking Binary: Towards a Continuum of Conceptual Similarities in Self-Supervised Learning

- will take more time to set-up

- will leave it for later
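For the unnormalized contrastive learning idea above, a minimal sketch may help make the trade-off concrete. It is not an implementation from the meeting: it assumes an InfoNCE-style loss for a single anchor, and the names `info_nce`, `normalize`, and `magnitude_weight` are hypothetical. With `normalize=True` the logits are cosine similarities; with `normalize=False` they are raw dot products, optionally combined with a penalty on squared norms as one possible way to "penalize large magnitudes".

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def l2_normalize(v, eps=1e-12):
    """Scale v to unit length so that only its direction remains."""
    norm = math.sqrt(dot(v, v))
    return [x / max(norm, eps) for x in v]


def info_nce(anchor, positive, negatives, temperature=0.1,
             normalize=True, magnitude_weight=0.0):
    """InfoNCE-style loss for one anchor (illustrative sketch).

    normalize=True  -> cosine-similarity logits (the usual setup).
    normalize=False -> raw dot-product logits plus an optional penalty
                       on the mean squared norm (hypothetical variant).
    """
    vecs = [anchor, positive] + list(negatives)
    penalty = 0.0
    if normalize:
        vecs = [l2_normalize(v) for v in vecs]
    else:
        penalty = magnitude_weight * sum(dot(v, v) for v in vecs) / len(vecs)

    a, candidates = vecs[0], vecs[1:]
    logits = [dot(a, c) / temperature for c in candidates]

    # Numerically stable log-sum-exp; the positive is at index 0.
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return (log_sum - logits[0]) + penalty


if __name__ == "__main__":
    a, p = [1.0, 0.0], [0.9, 0.1]
    negs = [[0.0, 1.0], [-1.0, 0.0]]
    a_big = [3.0 * x for x in a]  # same direction, larger magnitude

    print("normalized:  ", info_nce(a, p, negs), info_nce(a_big, p, negs))
    print("unnormalized:", info_nce(a, p, negs, normalize=False),
          info_nce(a_big, p, negs, normalize=False))
```

In the normalized case, rescaling the anchor leaves the loss unchanged, which illustrates both the "focus on direction" benefit and the "missing magnitude information" cost. In the unnormalized case, the loss can be lowered simply by growing the vectors, which is why some regularizer or rescaling would likely be needed.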

PhD Registration

- registered

M.Sc. Students/Interns

- Iye Szin will present her work so far next week.

ML Course

- Publish Video Tutorial on PyTorch

Miscellaneous

- Summer School in Cambridge

- Poster?

Meeting 25/07

Research

- Goal-oriented working mode:

- define subgoals and milestones

- (make sure that you can evaluate them, and define criteria of success, scores, etc.)

- By 17.08.2023, 10:00:

- Define topic, sub-problem, open challenge, your approach, toy task, full experiment

- RAAD 2024, 20.12.2023: concept paper with first results

- Spring 2024: A+ robotics conference paper on simulation experiments

- Summer 2024: A+ robotics paper on real robot experiments

M.Sc. Students/Interns

Miscellaneous