Publications

Publication List with Images

2024

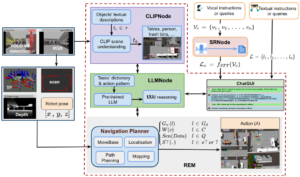

Nwankwo, Linus; Rueckert, Elmar. In Workshop of the 2024 ACM/IEEE International Conference on Human-Robot Interaction (HRI ’24 Workshop), March 11–14, 2024, Boulder, CO, USA. ACM, New York, NY, USA, 2024.

Tags: Autonomous Navigation, Human-Robot Interaction, Large Language Models, mobile navigation

Abstract: In this paper, we extended the method proposed in [17] to enable humans to interact naturally with autonomous agents through vocal and textual conversations. Our extended method exploits the inherent capabilities of pre-trained large language models (LLMs), multimodal visual language models (VLMs), and speech recognition (SR) models to decode the high-level natural language conversations and semantic understanding of the robot's task environment, and abstract them to the robot's actionable commands or queries. We performed a quantitative evaluation of our framework's natural vocal conversation understanding with participants from different racial backgrounds and English language accents. The participants interacted with the robot using both vocal and textual instructional commands. Based on the logged interaction data, our framework achieved 87.55% vocal command decoding accuracy, 86.27% command execution success, and an average latency of 0.89 seconds from receiving the participants' vocal chat commands to initiating the robot’s actual physical action. The video demonstrations of this paper can be found at https://linusnep.github.io/MTCC-IRoNL/

Nwankwo, Linus; Rueckert, Elmar. The Conversation is the Command: Interacting with Real-World Autonomous Robots Through Natural Language. Proceedings Article. In: HRI '24: Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction, pp. 808–812, ACM/IEEE Association for Computing Machinery, New York, NY, USA, 2024, ISBN: 9798400703232 (Published as late breaking results. Supplementary video: https://cloud.cps.unileoben.ac.at/index.php/s/fRE9XMosWDtJ339).

Tags: Autonomous Navigation, Large Language Models

Abstract: In recent years, autonomous agents have surged in real-world environments such as our homes, offices, and public spaces. However, natural human-robot interaction remains a key challenge. In this paper, we introduce an approach that synergistically exploits the capabilities of large language models (LLMs) and multimodal vision-language models (VLMs) to enable humans to interact naturally with autonomous robots through conversational dialogue. We leveraged the LLMs to decode the high-level natural language instructions from humans and abstract them into precise robot-actionable commands or queries. Further, we utilised the VLMs to provide a visual and semantic understanding of the robot's task environment. Our results, with 99.13% command recognition accuracy and 97.96% command execution success, show that our approach can enhance human-robot interaction in real-world applications. The video demonstrations of this paper can be found at https://osf.io/wzyf6 and the code is available at our GitHub repository.

Compact List without Images

Conferences

Nwankwo, Linus; Rueckert, Elmar. In Workshop of the 2024 ACM/IEEE International Conference on Human-Robot Interaction (HRI ’24 Workshop), March 11–14, 2024, Boulder, CO, USA. ACM, New York, NY, USA, 2024.

Proceedings Articles

Nwankwo, Linus; Rueckert, Elmar. The Conversation is the Command: Interacting with Real-World Autonomous Robots Through Natural Language. In: HRI '24: Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction, pp. 808–812, ACM/IEEE Association for Computing Machinery, New York, NY, USA, 2024, ISBN: 9798400703232 (Published as late breaking results. Supplementary video: https://cloud.cps.unileoben.ac.at/index.php/s/fRE9XMosWDtJ339).