Publication List with Images

2025

Nwankwo, Linus; Ellensohn, Bjoern; Dave, Vedant; Hofer, Peter; Forstner, Jan; Villneuve, Marlene; Galler, Robert; Rueckert, Elmar. EnvoDat: A Large-Scale Multisensory Dataset for Robotic Spatial Awareness and Semantic Reasoning in Heterogeneous Environments. Proceedings Article. In: IEEE International Conference on Robotics and Automation (ICRA 2025), 2025. Tags: Autonomous Navigation, robotics, SLAM

2024

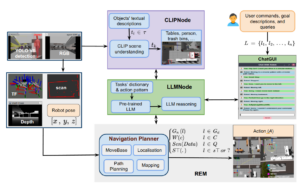

Nwankwo, Linus; Rueckert, Elmar. The Conversation is the Command: Interacting with Real-World Autonomous Robots Through Natural Language. Proceedings Article. In: HRI '24: Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction, pp. 808–812, ACM/IEEE Association for Computing Machinery, New York, NY, USA, 2024, ISBN: 9798400703232. (Published as late breaking results. Supplementary video: https://cloud.cps.unileoben.ac.at/index.php/s/fRE9XMosWDtJ339) Tags: Autonomous Navigation, Large Language Models

Abstract: In recent years, autonomous agents have surged in real-world environments such as our homes, offices, and public spaces. However, natural human-robot interaction remains a key challenge. In this paper, we introduce an approach that synergistically exploits the capabilities of large language models (LLMs) and multimodal vision-language models (VLMs) to enable humans to interact naturally with autonomous robots through conversational dialogue. We leveraged the LLMs to decode the high-level natural language instructions from humans and abstract them into precise robot-actionable commands or queries. Further, we utilised the VLMs to provide a visual and semantic understanding of the robot's task environment. Our results, with 99.13% command recognition accuracy and 97.96% command execution success, show that our approach can enhance human-robot interaction in real-world applications. The video demonstrations of this paper can be found at https://osf.io/wzyf6 and the code is available at our GitHub repository.

2023

Yadav, Harsh; Xue, Honghu; Rudall, Yan; Bakr, Mohamed; Hein, Benedikt; Rueckert, Elmar; Nguyen, Ngoc Thinh. Deep Reinforcement Learning for Mapless Navigation of Autonomous Mobile Robot. Proceedings Article. In: International Conference on System Theory, Control and Computing (ICSTCC), 2023. (October 11-13, 2023, Timisoara, Romania.) Tags: Autonomous Navigation, Deep Learning, Reinforcement Learning

2022

Xue, Honghu; Song, Rui; Petzold, Julian; Hein, Benedikt; Hamann, Heiko; Rueckert, Elmar. End-To-End Deep Reinforcement Learning for First-Person Pedestrian Visual Navigation in Urban Environments. Proceedings Article. In: International Conference on Humanoid Robots (Humanoids 2022), 2022. Tags: Autonomous Navigation, Deep Learning, mobile navigation

Abstract: We solve a visual navigation problem in an urban setting via deep reinforcement learning in an end-to-end manner. A major challenge of a first-person visual navigation problem lies in severe partial observability and sparse positive experiences of reaching the goal. To address partial observability, we propose a novel 3D-temporal convolutional network to encode sequential historical visual observations; its effectiveness is verified by comparison with a commonly used frame-stacking approach. For sparse positive samples, we propose an improved automatic curriculum learning algorithm, NavACL+, which proposes meaningful curricula starting from easy tasks and gradually generalizes to challenging ones. NavACL+ is shown to facilitate the learning process, improve the task success rate on difficult tasks by at least 40%, and offer enhanced generalization to different initial poses compared to training from a fixed initial pose and the original NavACL algorithm.

Compact List without Images

Proceedings Articles

Nwankwo, Linus; Ellensohn, Bjoern; Dave, Vedant; Hofer, Peter; Forstner, Jan; Villneuve, Marlene; Galler, Robert; Rueckert, Elmar. EnvoDat: A Large-Scale Multisensory Dataset for Robotic Spatial Awareness and Semantic Reasoning in Heterogeneous Environments. Proceedings Article. In: IEEE International Conference on Robotics and Automation (ICRA 2025), 2025.

Nwankwo, Linus; Rueckert, Elmar. The Conversation is the Command: Interacting with Real-World Autonomous Robots Through Natural Language. Proceedings Article. In: HRI '24: Companion of the 2024 ACM/IEEE International Conference on Human-Robot Interaction, pp. 808–812, ACM/IEEE Association for Computing Machinery, New York, NY, USA, 2024, ISBN: 9798400703232. (Published as late breaking results. Supplementary video: https://cloud.cps.unileoben.ac.at/index.php/s/fRE9XMosWDtJ339)

Yadav, Harsh; Xue, Honghu; Rudall, Yan; Bakr, Mohamed; Hein, Benedikt; Rueckert, Elmar; Nguyen, Ngoc Thinh. Deep Reinforcement Learning for Mapless Navigation of Autonomous Mobile Robot. Proceedings Article. In: International Conference on System Theory, Control and Computing (ICSTCC), 2023. (October 11-13, 2023, Timisoara, Romania.)

Xue, Honghu; Song, Rui; Petzold, Julian; Hein, Benedikt; Hamann, Heiko; Rueckert, Elmar. End-To-End Deep Reinforcement Learning for First-Person Pedestrian Visual Navigation in Urban Environments. Proceedings Article. In: International Conference on Humanoid Robots (Humanoids 2022), 2022.