Author: Elmar Rueckert

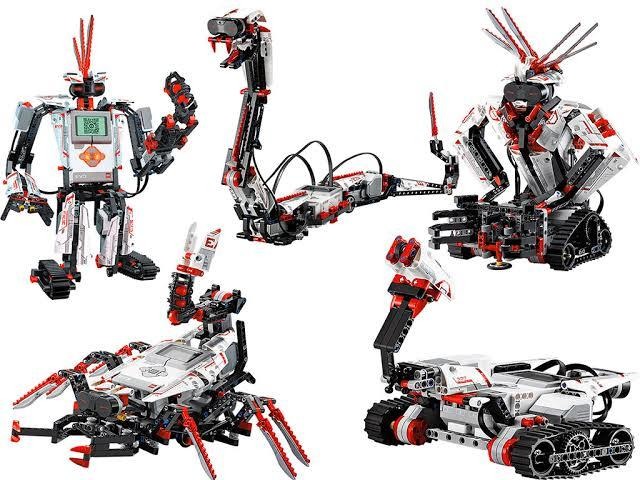

GitHub LEGO Robotic EV3 Python

This open-source project contains tools and demos for Python development with the Lego Mindstorms EV3 and EV3Dev bricks.

The content is presented in an accessible way, and we have created numerous tutorials and exercises for school students.

GitHub Code & Links

- https://github.com/ai-lab-science/LEGORoboticsPython

- This repository is part of our Lego robotics project for school students.

Details on the Software Development

This introductory talk describes the basic steps for building a LEGO robot and programming it with Python.

Further Links and Tutorials

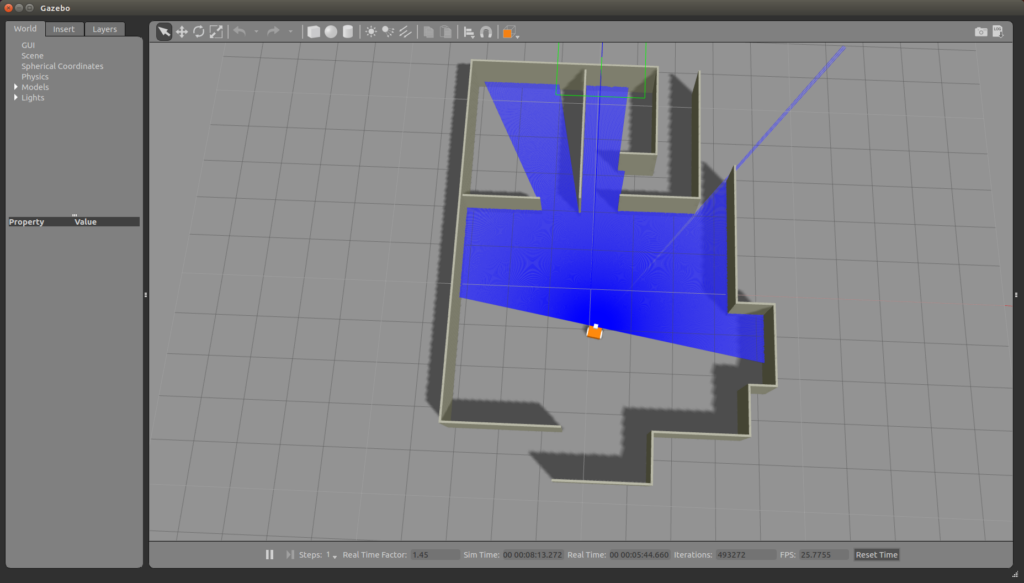

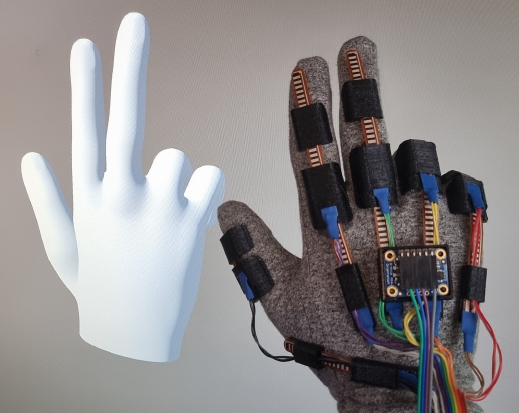

GitHub High-Accuracy Sensor Glove, ROS, Gazebo

Sensor gloves are gaining importance in tracking hand and finger movements in virtual reality applications as well as in scientific research. In this project, we developed a low-budget, yet accurate sensor glove system that uses flex sensors for fast and efficient motion tracking.

The contributions are ROS Interfaces, simulation models as well as motion modeling approaches.

GitHub Code & Links

- https://github.com/ai-lab-science/SensorGloves

- The software development is part of the TRAIN project.

- The development is based on prior sensor glove designs.

Details on the Software Development

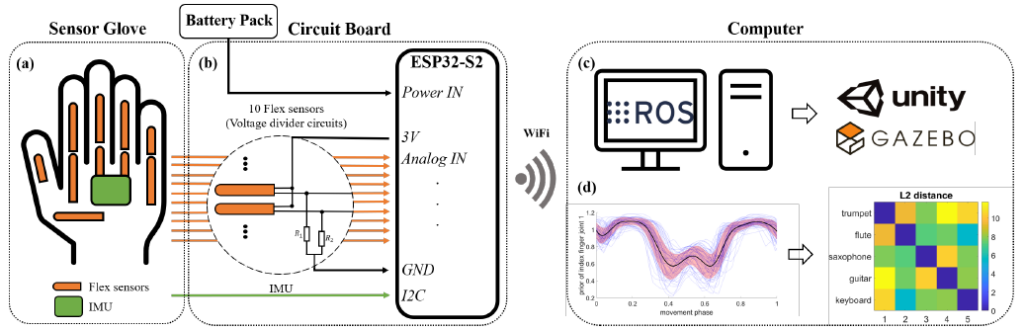

The figure shows a simplified schematic diagram of the system architecture for our sensor glove design:

(a) Glove layout with sensor placements, the orange fields denote the flex sensors, while the IMU is marked as a green rectangle,

(b) Circuit board wired to the sensor glove; it has 10 voltage dividers, one for reading each flex sensor, connected to ADC pins of the ESP32-S2 microcontroller, and the IMU is connected to the I2C pins,

(c) The ESP32-S2 sends the raw data via WiFi as ROS messages to the computer, which allows a real-time visualization in Unity or Gazebo,

(d) Post-processing of the recorded data, e.g. learning probabilistic movement models and searching for similarities.
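The voltage-divider readout in (b) can be illustrated with a minimal sketch. It is not taken from the SensorGloves repository. It assumes the flex sensor sits between the supply voltage and the ADC pin, with a fixed resistor from the ADC pin to ground; the function name `flex_resistance`, the default ADC range `adc_max` and the fixed resistor value `r_fixed` are hypothetical and chosen only for illustration.

```python
def flex_resistance(adc_count: int, adc_max: int = 8191,
                    r_fixed: float = 10_000.0) -> float:
    """Estimate a flex sensor's resistance (ohms) from a raw ADC count.

    Assumed wiring (hypothetical): supply -> flex sensor -> ADC pin -> r_fixed -> ground.
    Then V_out / V_supply = r_fixed / (r_flex + r_fixed), so the supply
    voltage cancels and only the ratio adc_count / adc_max is needed.
    """
    if not 0 < adc_count <= adc_max:
        raise ValueError("adc_count must be in the range (0, adc_max]")
    ratio = adc_count / adc_max  # approximates V_out / V_supply
    return r_fixed * (1.0 - ratio) / ratio


if __name__ == "__main__":
    # A mid-scale reading means the sensor resistance equals the fixed resistor.
    print(flex_resistance(4096, adc_max=8192))
```

Working with the ratio rather than absolute voltages keeps the estimate independent of the exact supply voltage, as long as the ADC reference tracks the supply.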

Publications

A research publication by Robin Denz, Rabia Demirci, M. Ege Cansev, Adna Bliek, Philipp Beckerle, Elmar Rueckert and Nils Rottmann is currently under review.

190.003 CPS Research Seminar I (2SH SE, SS)

Univ.-Prof. Dr. Elmar Rueckert organizes this research seminar. Topics include research in AI, machine and deep learning, robotics, cyber-physical systems and process informatics.

Language:

English only

Are you an undergraduate, graduate, or doctoral student and want to learn more about AI?

This course gives you the opportunity to listen to research presentations on the latest achievements. The target audience is non-experts, so no prior knowledge of AI is required.

To get the ECTS credits, you select a research paper, read it and present it in the research seminar (10-15 min presentation). Instead of selecting a paper from our list, you can also suggest one. This suggestion has to be discussed with Univ.-Prof. Dr. Elmar Rueckert first.

After the presentation, the paper is discussed for 10-15 min.

In addition, external presenters who are leading researchers in AI will be invited. External speakers present their research in 30-45 min, followed by a 15 min discussion.

Location & Time

- Location: HS Kuppelwieser

- Dates: Thursdays 12:00-14:00, with some exceptions; see the list of dates below.

List of Talks and Dates

The specified time windows do not include discussions.

- 26.04.24 11:15-12:45 HS TPT

- Univ.-Prof. Dr. Elmar Rueckert, Introductory Slides.

- Ph.D. research talk: Raphael Scharf on CFD Simulation of Textured Surfaces in Lubricated Sliding Contacts. Slides.

- 03.05.24 11:15-12:45 HS TPT

- Ph.D. research talk: Vedant Dave on Multimodal Visual-Tactile Representation Learning through Self-Supervised Contrastive Pre-Training (ICRA 2024).

- 17.05.24 11:15-12:45 HS TPT

- Ph.D. research talk: Sahar Keshavarz on Real-Time Autonomous Decision-Making in Well Construction.

- Ph.D. research talk: Marcel Czipin on Data-Driven Approaches for Process Improvement in Wire-Arc Additive Manufacturing.

- 24.05.24 11:15-12:45 HS TPT

- CANCELED due to the LANGE Nacht der FORSCHUNG 2024

- 07.06.24 11:15-12:45 HS TPT

- Ph.D. research talk: Dipl.-Ing. Karin Ungerer on Vision-based Vibration Measurements.

- Ph.D. research talk: Dipl.-Ing. Nikolaus Feith on Integrating Human Expertise in Continuous Spaces: A Novel Interactive Bayesian Optimization Framework with Preference Expected Improvement (UR 2024).

- 14.06.24 11:15-12:45 HS TPT

- 21.06.24 11:15-12:45 HS TPT

- Ph.D. research talk: Dipl.-Ing. Melanie Neubauer on Semi-Autonomous Fast Object Segmentation and Tracking Tool for Industrial Applications (UR 2024).

- Ph.D. research talk: Dipl.-Ing. Fotios Lygerakis on M2CURL: Sample-Efficient Multimodal Reinforcement Learning via Self-Supervised Representation Learning for Robotic Manipulation (UR 2024).

- Ph.D. research talk: Dipl.-Ing. Nikolaus Feith on Advancing Interactive Robot Learning: A User Interface Leveraging Mixed Reality and Dual Quaternions (UR 2024).

Some Research Paper Candidates

- Predictive Whittle Networks for Time Series by Yu et al. (UAI 2022)

- Natural Gradient Shared Control by Oh, Wu, Toussaint and Mainprice

- Learning to solve sequential physical reasoning problems from a scene image by Driess, Ha and Toussaint (IJRR 2021)

- Deep Visual Constraints: Neural Implicit Models for Manipulation Planning from Visual Input by Ha, Driess and Toussaint

- AW-Opt: Learning Robotic Skills with Imitation and Reinforcement at Scale by Lu et al. (CoRL 2021)

- Decision Transformer: Reinforcement Learning via Sequence Modeling (NeurIPS 2021) by L. Chen et al.

- CURL: Contrastive Unsupervised Representations for Reinforcement Learning (ICML 2020) by A. Srinivas et al

- Offline-to-Online Reinforcement Learning via Balanced Replay and Pessimistic Q-Ensemble (CoRL 2021) by S. Lee et al

- Offline Meta-Reinforcement Learning with Online Self-Supervision by Pong et al. (ICML 2022)

- Information is Power: Intrinsic Control via Information Capture by Rhinehart et al. (NeurIPS 2021)

- Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks by Finn et al.

- Event-based Asynchronous Sparse Convolutional Networks by Messikommer et al.

- Gaussian-binary Restricted Boltzmann Machines on Modeling Natural Image Statistics by Wang et al.

- A Spiking Neural Network Model of Depth from Defocus for Event-based Neuromorphic Vision by Haessig et al.

- Dream to Control: Learning Behaviors by Latent Imagination by Hafner et al.

Humanoid Robotics Exercise (RO5300)

Univ.-Prof. Dr. Elmar Rueckert taught this course at the University of Luebeck in 2018, 2019 and 2020.

Teaching Assistant:

Nils Rottmann, M.Sc.

Language:

English and German

Course Details

This page provides short videos that explain Exercise 02 of the Humanoid Robotics lecture.

Datenstrukturen und Algorithmen (708.031)

Univ.-Prof. Dr. Elmar Rueckert taught this course at the Technical University Graz in the winter semesters 2012/13 and 2013/14.

Language:

German only

Link to the university's course page

Link to the course in the TUG online system.

Course Details

- Elementary data structures (arrays, stacks, queues).

- Asymptotic runtime analysis of programs (Big-O notation).

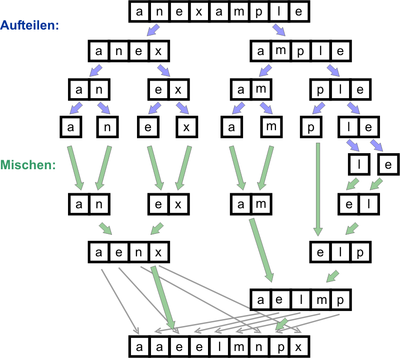

- Sorting algorithms (insertion sort, selection sort, quicksort, mergesort, heapsort, distribution sort, selecting the i-th largest element, randomization, lower bounds on runtime).

- Hashing (overflow lists, open addressing, hash functions).

- Search methods (sequential, binary, interpolation, quadratic binary search).

- Tree structures (binary trees, (a,b)-trees, amortized restructuring costs, optimal search trees).

- Dynamic data management (dictionary problem, queue problem, union-find problem).

- Algorithmic techniques (incremental insertion, elimination, divide and conquer, dynamic programming, randomization).

With up to 390 participants per lecture.

Literature

- Cormen, Leiserson, Rivest: Introduction to Algorithms, MIT Press, London, 1990.

Probabilistic Learning for Robotics (RO5601)

Univ.-Prof. Dr. Elmar Rueckert taught this course at the University of Luebeck in the winter semester 2018.

Language:

English only

Course Details

In accompanying exercises and hands-on tutorials, the students will experiment with state-of-the-art machine learning methods and robotic simulation tools. In particular, Mathworks’ MATLAB, the robot middleware ROS and the simulation tool V-REP will be used. The exercises and tutorials will also take place in the seminar room 2.132 on selected Fridays (see the course materials and dates below).

Prerequisites (recommended)

- Humanoid Robotics (RO5300)

- Robotics (CS2500)

Follow this link to register for the course: https://moodle.uni-luebeck.de/course/view.php?id=3793.

Location & Time: Seminarraum Informatik 5 (Von Neumann), room 2.132, 12:15-14:00

Course materials and dates

- Probabilistic Learning for Robotics Intro (L1: October 18th)

- Introductions to Topics I-III: Bayesian Inference, Gaussian Processes & Kalman/Particle Filters (L2: October 25th)

- Introductions to Topics IV-VI: Bayesian Optimization, Spiking Networks for Planning, Probabilistic Movement Primitives (L3: November 1st)

RAS Research Seminar

Univ.-Prof. Dr. Elmar Rueckert taught this course at the University of Luebeck in 2018, 2019 and 2020.

Language:

English only

Course Details

In this research seminar we discuss state-of-the-art research topics in robotics, machine learning and autonomous systems. Presenters are invited guest speakers, researchers, and graduate and undergraduate students.

The seminar takes place on Fridays:

- In WS2018/19 we meet in our Seminarraum Informatik 2/3 (Cook/Karp) at 1 pm s.t.

- In SS2019 we meet in our Seminarraum Mathematik 2 (Banach) at 11 am s.t.

Here is the event calendar with upcoming talks. Click on this link to add this calendar to your account. You may register for this course via Moodle.

Probabilistic Machine Learning (RO5101 T)

Univ.-Prof. Dr. Elmar Rueckert taught this course at the University of Luebeck in the winter semesters of 2019 and 2020.

Teaching Assistant:

Nils Rottmann, M.Sc.

Language:

English only

Course Details

Follow this link to register for the course: https://moodle.uni-luebeck.de

Some remarks on the UzL Module idea: The lecture Probabilistic Machine Learning belongs to the Module Robot Learning (RO4100). In the winter semester, Prof. Dr. Elmar Rueckert is teaching the course Probabilistic Machine Learning (RO5101 T). In the summer semester, Prof. Dr. Elmar Rueckert is teaching the course Reinforcement Learning (RO5102 T). Important: Due to the study regulations, students have to attend both lectures to receive a final grade. Thus, there will be only a single written exam for both lectures. You can register for the written exam at the end of a semester.

Important dates

- Written exam: 4 February 2021 (second date: 4 March 2021)

- Assignment I: Friday, 11 December 2020, 23:00

- Assignment II: Friday, 22 January 2021, 23:00

The course topics are

- Introduction to Probability Theory (Statistics refresher, Bayes Theorem, Common Probability distributions, Gaussian Calculus).

- Linear Probabilistic Regression (Linear models, Maximum Likelihood, Bayes & Logistic Regression).

- Nonlinear Probabilistic Regression (Radial basis function networks, Gaussian Processes, Recent research results in Robotic Movement Primitives, Hierarchical Bayesian & Mixture Models).

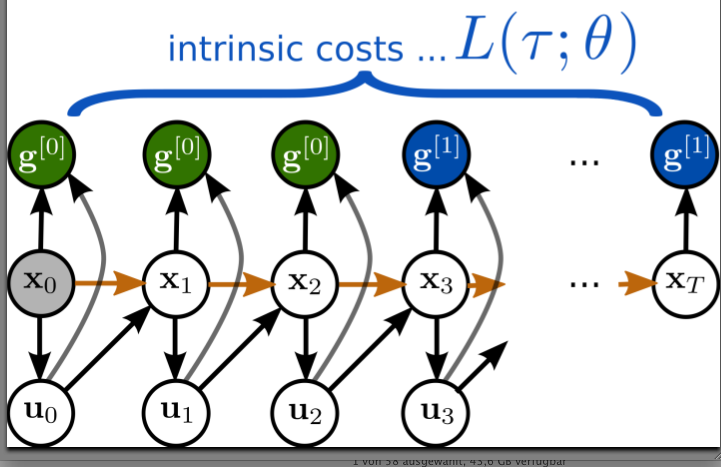

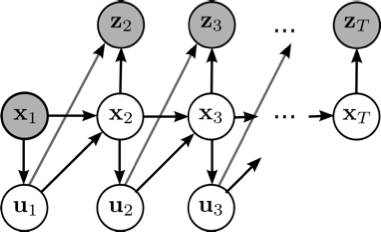

- Probabilistic Inference for Filtering, Smoothing and Planning (Classic, Extended & Unscented Kalman Filters, Particle Filters, Gibbs Sampling, Recent research results in Neural Planning).

- Probabilistic Optimization (stochastic black-box optimizers: Covariance Matrix Adaptation Evolution Strategies & Natural Evolution Strategies, Bayesian Optimization).
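To give a flavour of the filtering topic listed above, here is a minimal sketch of a single measurement update of a one-dimensional Kalman filter. It is not taken from the course materials. The function name `kalman_update` and its parameters are hypothetical, and the sketch assumes a scalar state that is observed directly with Gaussian measurement noise.

```python
def kalman_update(mean: float, var: float, z: float, meas_var: float):
    """Fuse a Gaussian prior N(mean, var) with a direct measurement z.

    Assumes the measurement model z = x + noise, noise ~ N(0, meas_var).
    Returns the posterior mean and variance.
    """
    gain = var / (var + meas_var)        # Kalman gain in the scalar case
    new_mean = mean + gain * (z - mean)  # move the mean towards the measurement
    new_var = (1.0 - gain) * var         # uncertainty shrinks after the update
    return new_mean, new_var


if __name__ == "__main__":
    # Prior and measurement are equally uncertain, so the posterior mean
    # lies halfway between them and the variance is halved.
    print(kalman_update(mean=0.0, var=1.0, z=2.0, meas_var=1.0))
```

The gain weights the measurement by the relative uncertainty of prior and sensor: a noisy sensor (large `meas_var`) gives a small gain and leaves the prior almost unchanged.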

The learning objectives / qualifications are

- Students get a comprehensive understanding of basic probability theory concepts and methods.

- Students learn to analyze the challenges in a task and to identify promising machine learning approaches.

- Students will understand the difference between deterministic and probabilistic algorithms and can define underlying assumptions and requirements.

- Students understand and can apply advanced regression, inference and optimization techniques to real world problems.

- Students know how to analyze the models’ results, improve the model parameters and can interpret the model predictions and their relevance.

- Students understand how the basic concepts are used in current state-of-the-art research in robot movement primitive learning and in neural planning.

Location & times

- Lectures on Thursdays, 12:15-13:45, held virtually in the WEBEX room of Elmar Rueckert.

- Q&A session on Mondays, 12:15-13:45, held virtually in the WEBEX room of Nils Rottmann. The session will be closed if no questions were asked by 12:20.

Interactive Online Lectures

In the lecture, Prof. Rueckert uses a self-made lightboard to ensure an interactive and professional teaching environment. Have a look at the post on how to build such a lightboard. Here is an example recording.

Requirements

Strong statistical and mathematical knowledge is required beforehand. It is highly recommended to attend the course Humanoid Robotics (RO5300) prior to attending this course. The students will also experiment with state-of-the-art machine learning methods and robotic simulation tools which require strong programming skills.

Grading

The course is accompanied by two written assignments. Both assignments must be passed to qualify for the written exam. Details will be presented in the first course unit on October 22nd, 2020.

Materials for the Exercise

The course is accompanied by three graded assignments on Probabilistic Regression, Probabilistic Inference and Probabilistic Optimization. The assignments will include algorithmic implementations in Matlab, Python or C++ and will be presented during the exercise sessions. The Robot Operating System (ROS) and the simulation environment Gazebo will also be part of some assignments. To experiment with state-of-the-art robot control and learning methods, Mathworks’ MATLAB will be used. If you do not have it installed yet, please follow the instructions of our IT-Service Center.

Literature

- Daphne Koller, Nir Friedman. Probabilistic Graphical Models: Principles and Techniques. ISBN 978-0-262-01319-2

- Christopher M. Bishop. Pattern Recognition and Machine Learning. Springer (2006). ISBN 978-0-387-31073-2.

- David Barber. Bayesian Reasoning and Machine Learning, Cambridge University Press (2012). ISBN 978-0-521-51814-7.

- Kevin P. Murphy. Machine Learning: A Probabilistic Perspective. ISBN 978-0-262-01802-9

Reinforcement Learning (RO4100 T)

Univ.-Prof. Dr. Elmar Rueckert taught this course at the University of Luebeck in the summer semester 2020.

Teaching Assistant:

Honghu Xue, M.Sc.

Language:

English only

Course Details

The lecture Reinforcement Learning belongs to the Module Robot Learning (RO4100).

In the winter semester, Prof. Dr. Elmar Rueckert is teaching the course Probabilistic Machine Learning – PML (RO5101 T).

In the summer semester, Prof. Dr. Elmar Rueckert is teaching the course Reinforcement Learning – RL (RO4100 T).

Important Remarks

- Students will receive a single grade for the Module Robot Learning (RO4100) based on the average grade of PML and RL (rounded down in favor of the students).

- This course is organized through online lectures and exercises. Details on the organization will be discussed in our

FIRST MEETING: 17.04.2020 12:15-13:45

using the WEBEX tool. Please follow the instructions of the ITSC to set up your computer. Click on the links to create a Google Calendar event, join the WEBEX meeting or access the online slides.

Dates & Times of the Online Webex Meetings

- Lectures are organized on FRIDAYS, 12:15-13:45, WEBEX Link

- Exercises are organized on THURSDAYS, 09:15-10:00, WEBEX Link

Course description

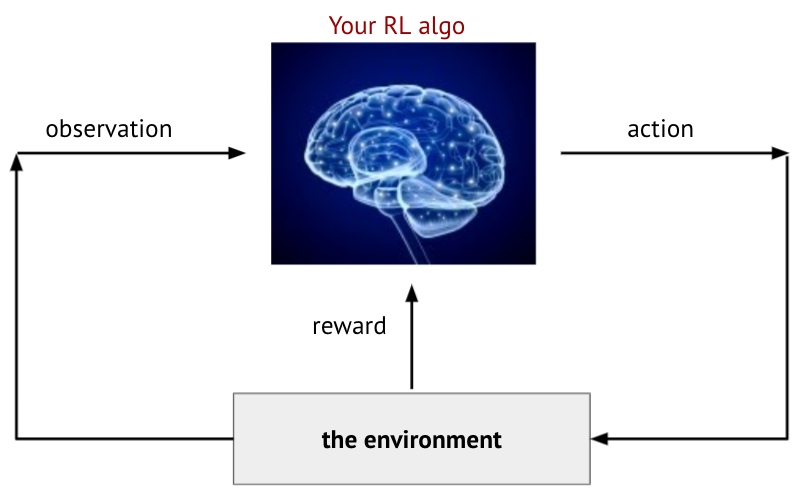

- Introduction to Robotics and Reinforcement Learning (Refresher on Robotics, kinematics, model learning and learning feedback control strategies).

- Foundations of Decision Making (Reward Hypothesis, Markov Property, Markov Reward Process, Value Iteration, Markov Decision Process, Policy Iteration, Bellman Equation, Link to Optimal Control).

- Principles of Reinforcement Learning (Exploration and Exploitation strategies, On & Off-policy learning, model-free and model-based policy learning, Algorithmic principles: Q-Learning, SARSA, (Multi-step) TD-Learning, Eligibility Traces).

- Deep Reinforcement Learning (Introduction to Deep Networks, Stochastic Gradient Descent, Function Approximation, Fitted Q-Iteration, (Double) Deep Q-Learning, Policy-Gradient approaches, Recent research results in Stochastic Deep Neural Networks).
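As an illustration of the value iteration topic named in the list above, the following is a minimal sketch on a tiny deterministic corridor world. It is not part of the course assignments. The environment (states in a row, a reward of 1 for entering the rightmost terminal state) and the function name `value_iteration` are assumptions made only for this example.

```python
def value_iteration(n_states: int = 5, gamma: float = 0.9, tol: float = 1e-8):
    """Value iteration on a hypothetical 1-D corridor.

    States 0..n_states-1 lie in a row; actions move one step left or right
    (moves beyond the left wall leave the state unchanged). Entering the
    rightmost state yields reward 1 and ends the episode.
    """
    goal = n_states - 1
    values = [0.0] * n_states  # the terminal state keeps value 0
    while True:
        delta = 0.0
        for s in range(goal):  # sweep over all non-terminal states
            candidates = []
            for step in (-1, 1):
                nxt = min(max(s + step, 0), goal)
                reward = 1.0 if nxt == goal else 0.0
                # Bellman optimality backup for a deterministic transition
                candidates.append(reward + gamma * values[nxt])
            best = max(candidates)
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:  # stop once a full sweep changes almost nothing
            return values


if __name__ == "__main__":
    print(value_iteration())
```

In this toy setting the value of a state is simply the reward discounted by the number of steps to the goal, which makes the result easy to check by hand.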

The learning objectives / qualifications are

- Students get a comprehensive understanding of basic decision making theories, assumptions and methods.

- Students learn to analyze the challenges in a reinforcement learning application and to identify promising learning approaches.

- Students will understand the difference between deterministic and probabilistic policies and can define underlying assumptions and requirements for learning them.

- Students understand and can apply advanced policy gradient methods to real world problems.

- Students know how to analyze the learning results and improve the policy learner parameters.

- Students understand how the basic concepts are used in current state-of-the-art research in robot reinforcement learning and in deep neural networks.

Follow this link to register for the course: https://moodle.uni-luebeck.de

Requirements

Basic knowledge of Machine Learning and Neural Networks is required. It is highly recommended to attend any of (but not restricted to) the following courses prior to attending this course: Probabilistic Machine Learning (RO 5101 T), Artificial Intelligence II (CS 5204 T), Machine Learning (CS 5450), Medical Deep Learning (CS 4374). The students will also experiment with state-of-the-art Reinforcement Learning (RL) methods on benchmark RL simulators (OpenAI Gym, PyBullet), which requires strong Python programming skills; knowledge of PyTorch is preferred. All assignment-related materials have been tested on a Windows machine (Win10 platform).

Grading

The course grades will be computed solely from submitted student reports of six assignments. The reports and the code have to be submitted (one report per team) to xue@rob.uni-luebeck.de. Please note the list of dates and deadlines below. Each assignment has a deadline of at least two weeks; some run longer.

Please use LaTeX for writing your report.

Bonus Points

Students can get Bonus Points (BP) during the lectures when all quiz questions are answered correctly (1 BP per lecture). In the assignments, BPs are given to students when optional (and often also challenging) tasks are implemented and discussed.

Materials for the Exercise

The course is accompanied by pieces of course work on policy search for discrete state and action spaces (grid world example), policy learning in continuous spaces using function approximation, and policy gradient methods in challenging simulated robotic tasks. The theoretical assignment questions are based on the lecture and on the first three sources in the literature list. It is strongly recommended to read (or watch) this material in parallel to attending the lectures. The assignments will include both written tasks and algorithmic implementations in Python. The tasks will be presented during the exercise sessions. The OpenAI Gym platform will be used as the simulation environment in the project works.

Literature

- Richard S. Sutton, Andrew Barto: Reinforcement Learning: An Introduction, second edition. The MIT Press, Cambridge, Massachusetts; London, England, 2018. Link to the online book (PDF)

- David Silver’s Reinforcement Learning online lecture series. Link to the online video and script

- Sergey Levine’s Deep Reinforcement Learning online lecture series. Link to the online video, Link to the script

- Csaba Szepesvári: Algorithms for Reinforcement Learning. Synthesis Lectures on Artificial Intelligence and Machine Learning, Morgan & Claypool, 2010.

- B. Siciliano, L. Sciavicco: Robotics: Modelling, Planning and Control, Springer, 2009.

- Puterman, Martin L. Markov decision processes: discrete stochastic dynamic programming. John Wiley & Sons, 2014.
